Nvidia has unveiled its next-generation Rubin chip architecture, a significant development in the fast-moving market for artificial intelligence hardware. The announcement came at a major global tech event, where the company introduced a platform built to meet the rapidly growing computational demands of cutting-edge AI models, data centers, and the so-called “AI factories” that drive generative and agentic systems.

Named in honor of the pioneering astronomer Vera Rubin, the new architecture succeeds Nvidia’s Blackwell platform and marks a significant move toward more integrated, system-level design. Rather than focusing on GPUs alone, Rubin brings together a set of co-designed components (a new GPU, a new CPU, networking, data processing units, and AI-optimized memory) in a single computing architecture tailored to large-scale AI workloads.

At the core of the platform is the Rubin GPU, which Nvidia says delivers a substantial gain in both performance and efficiency. According to the company, the chip can handle very large training and inference workloads with far fewer processors than its predecessors, which in turn reduces energy use and operating costs. Nvidia claims that Rubin can train complex AI models using a fraction of the hardware needed today while also lowering the cost per token for inference.

The Rubin platform also includes a new Vera CPU, designed to work in tandem with the GPU and to speed up the data-heavy tasks typical of today’s AI workflows. Networking technologies such as the latest NVLink interconnect, together with the platform’s data processing units, provide high-speed communication among thousands of chips, allowing AI models to scale efficiently across entire data centers.

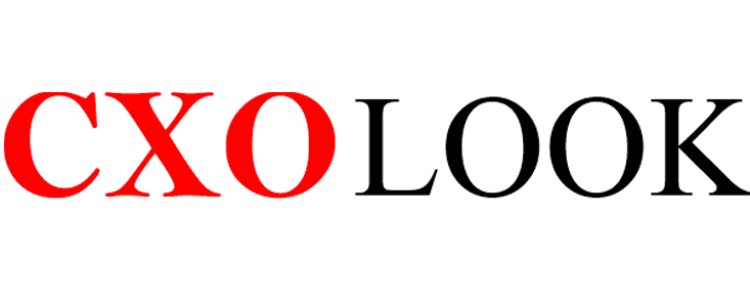

One of the standout products built on the new architecture is a rack-scale system that combines many Rubin GPUs and Vera CPUs into a single unit. The system is aimed at cloud providers, research labs, and businesses building large-scale AI infrastructure, and it is expected to play a key role in future supercomputing and enterprise AI deployments.

Nvidia says Rubin-based systems are already in production, with a wider rollout expected in the second half of 2026. Major cloud platforms and AI developers are expected to be among the first adopters as competition intensifies in the global AI infrastructure market.

The launch underscores Nvidia’s shift from individual chips to complete AI platforms. As AI models grow more complex and their real-world impact increases, the Rubin architecture is intended to keep Nvidia at the forefront of the global race to build faster, more efficient, and more scalable artificial intelligence systems.